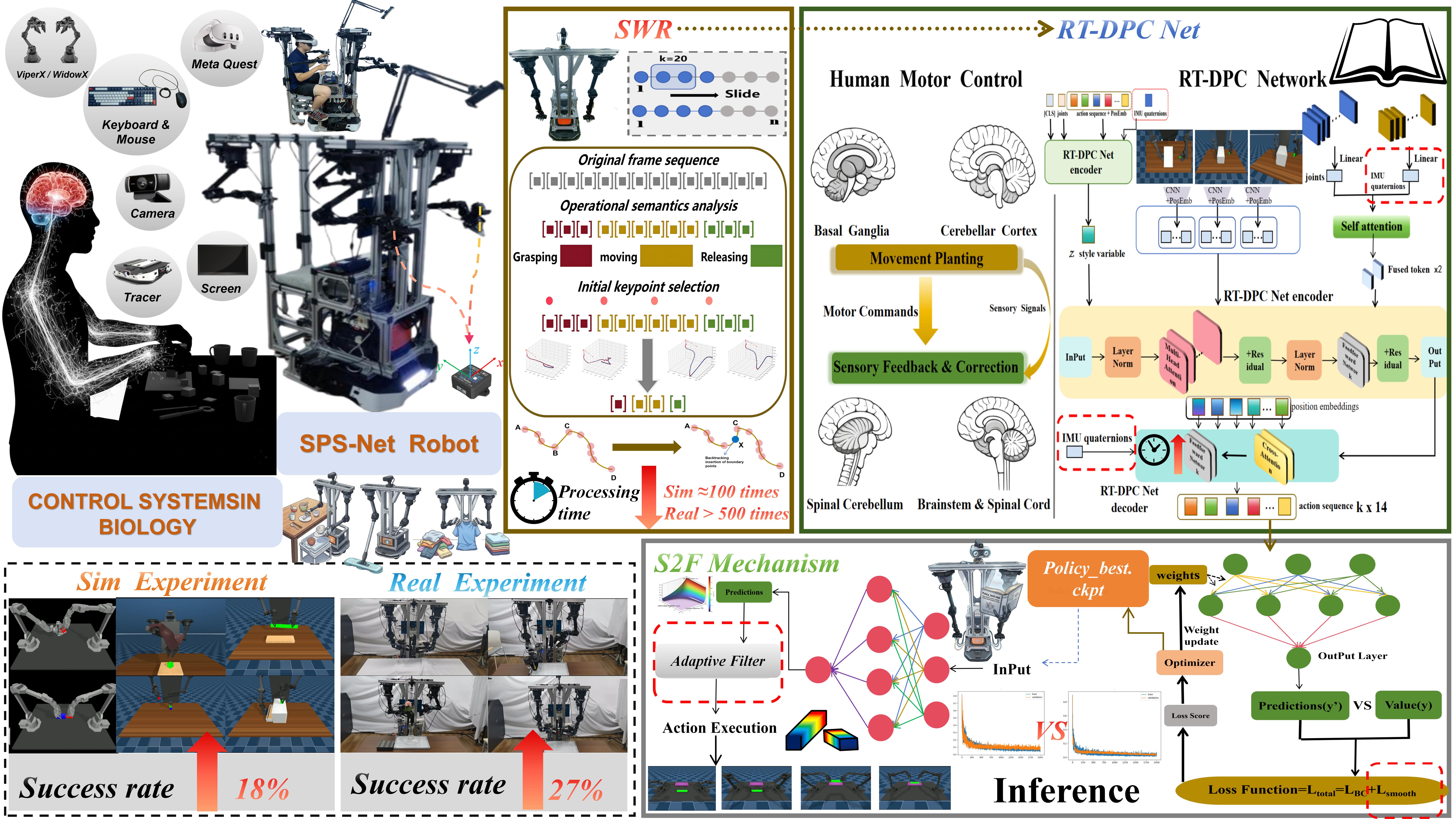

NeuroSPS: A Sensorimotor-Inspired Policy for Semantic, Proprioceptive, and Smooth Robotic Manipulation

Abstract

To address data redundancy, fine manipulation errors, poor disturbance rejection, and execution jitter in Vision-Language-Action (VLA) models and end-to-end imitation learning for long-horizon tasks, this paper proposes NeuroSPS, a neuro-inspired manipulation framework integrating human sensorimotor mechanisms. It introduces three core algorithmic and control innovations:

- First, the Semantic Window Refiner (SWR) algorithm combines a sliding window with operational-semantics detection, reducing trajectory preprocessing complexity from O(n²) to O(kn). It extracts intent-critical frames with high fidelity and accelerates data processing by roughly 100x, overcoming the bottleneck posed by massive demonstration data.

- Second, the Real-Time Dual-Path Proprioceptive Correction Network (RT-DPC Net) addresses the perception limitations of visual feedforward policies. Path I fuses joint states with high-precision end-effector IMU data at the encoder to improve multimodal state estimation. Path II adds a dynamically weighted, time-step-dependent injection at the decoder, enabling real-time closed-loop micro-correction during complex physical contact and raising fine-manipulation success rates by up to 18%.

- Finally, a synergistic training-inference mechanism, S2F, is designed. It adds a temporal smoothness loss during training and applies an adaptive filter driven by action change rates during inference, mitigating execution jitter and yielding an additional success-rate increase of up to 10% on fine tasks.
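
To make the last item more concrete, the following is a minimal sketch of what a temporal smoothness loss and a change-rate-driven filter could look like. It is not the NeuroSPS implementation: the function names, the squared-difference form of the loss, the exponential-smoothing form of the filter, and the parameters `alpha_min`, `alpha_max`, and `rate_scale` are all assumptions made for illustration. In particular, the sketch assumes that small action changes are treated as jitter and smoothed heavily, while large changes are treated as intentional motion and passed through.

```python
from typing import List, Sequence

Action = Sequence[float]


def temporal_smoothness_loss(actions: Sequence[Action]) -> float:
    """Mean squared difference between consecutive actions (assumed loss form)."""
    if len(actions) < 2:
        return 0.0
    total, count = 0.0, 0
    for prev, curr in zip(actions, actions[1:]):
        for p, c in zip(prev, curr):
            total += (c - p) ** 2
            count += 1
    return total / count


def adaptive_filter(
    actions: Sequence[Action],
    alpha_min: float = 0.2,   # hypothetical: blend weight for near-zero change
    alpha_max: float = 1.0,   # hypothetical: blend weight for large change
    rate_scale: float = 0.5,  # hypothetical: change rate at which alpha saturates
) -> List[List[float]]:
    """Exponential smoothing whose blend weight grows with the action change rate."""
    if not actions:
        return []
    filtered = [list(actions[0])]
    for raw in actions[1:]:
        prev = filtered[-1]
        # Change rate: largest per-dimension jump from the last filtered action.
        rate = max(abs(r - p) for r, p in zip(raw, prev))
        ratio = min(1.0, rate / rate_scale)
        alpha = alpha_min + (alpha_max - alpha_min) * ratio
        filtered.append([alpha * r + (1.0 - alpha) * p for r, p in zip(raw, prev)])
    return filtered


if __name__ == "__main__":
    jittery = [[0.0], [0.1], [0.0], [0.1], [0.0]]
    smoothed = adaptive_filter(jittery)
    print(temporal_smoothness_loss(jittery), temporal_smoothness_loss(smoothed))
```

In this sketch the loss would be added to the imitation objective with some weighting during training, while the filter runs only at inference on the stream of predicted actions. The opposite filter design (damping large changes instead of small ones) is equally plausible from the description above.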

Evaluated on 10 complex simulated and real-world manipulation tasks, NeuroSPS outperforms current mainstream strategies with an average success rate of 74.6%. Compared with the ACT baseline, it improves task success rates by 21% on average (up to 35%), supporting its generalization capability and execution robustness in unstructured environments.